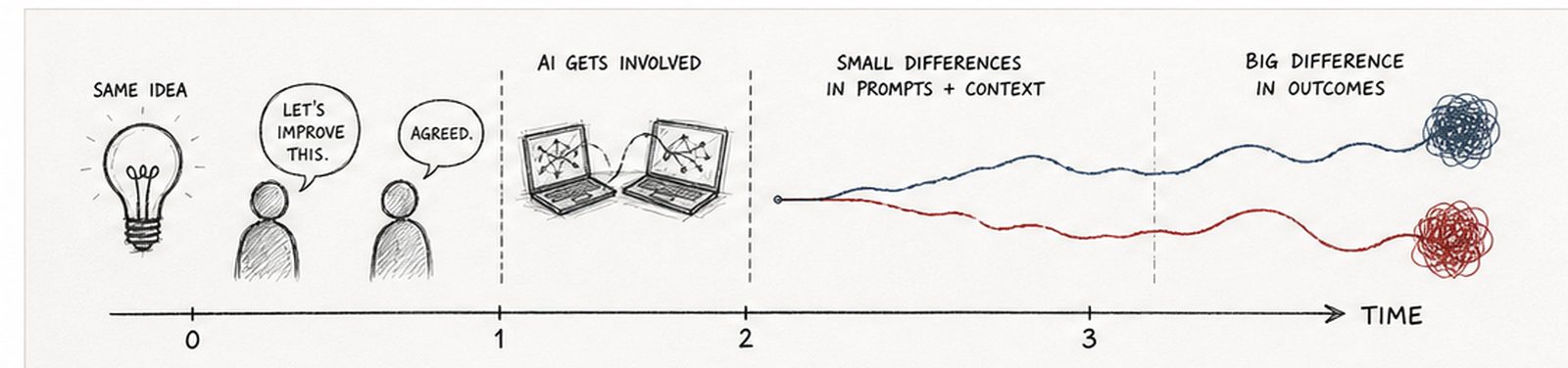

You're working with someone on an idea. They run it through their AI. You run it through yours. It feels like collaboration.

It already isn't.

And this isn't just at work. It shows up when you're dating, sharing reels, debating someone on social media, or texting a friend. Some of us are using AI just to check our spelling or to understand something better. Comically, that has quietly introduced a third person into almost every conversation we have.

The collaboration breaks down not because AI is failing, but because both people assume their systems are neutral.

They are not.

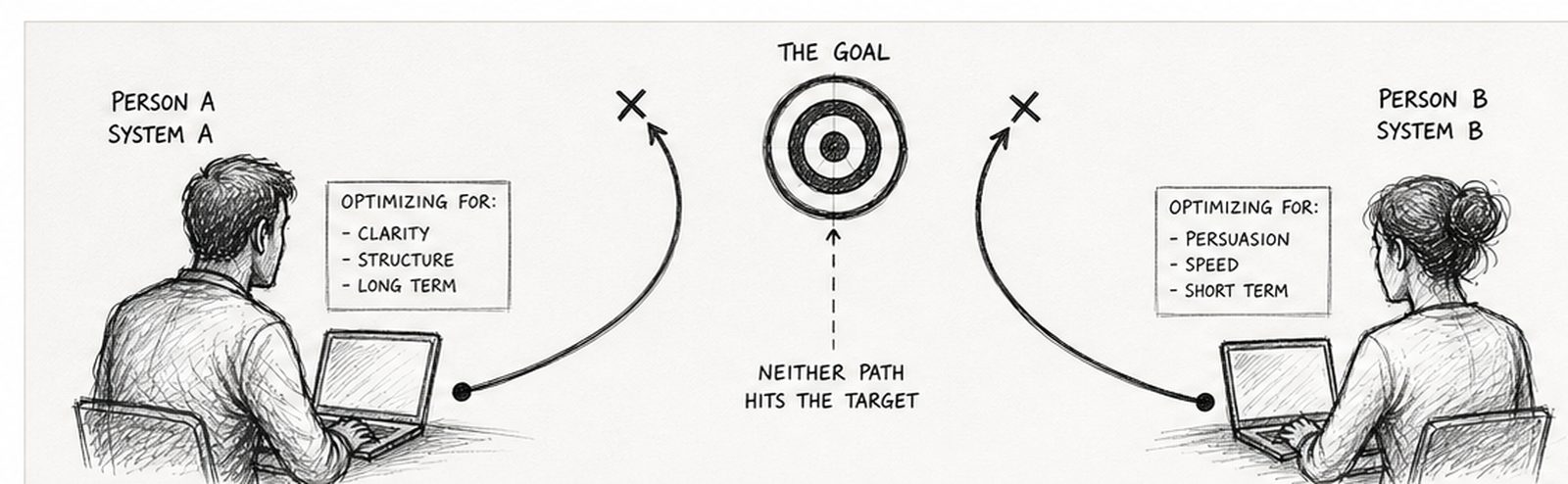

Each system is shaped by prior prompts, framing, context, and each person's definition of "better." One may be optimizing for clarity. Another for persuasion. Another for speed, risk, or financial outcome.

Both people believe they are refining one shared objective. Their systems are validating two different directions.

And no, starting a fresh chat does not fully protect you from this.

The drift is not only in the conversation history. It is also in the intent behind the prompt.

That is how collaboration turns into parallel validation without anyone realizing it.

This is a measurable phenomenon known as Synthetic Drift.

Want to neutralize most of it? Clear the cache. Properly.

AI does not actually have a cache to clear. Starting a new chat is not enough either. The drift lives in four layers. Start with the easiest. Most people only need the first two.

1. Confirm the target with your human

Before either of you touches AI, say the goal out loud. Have the other person say theirs. If those don't match, you're not collaborating yet. You're parallel-prompting. Align here first. Without this, the rest of the steps work against shifting targets.

2. Open a new chat with a new prompt

Not just a new chat. A new prompt written from the goal you just confirmed, not from how you usually ask. State the target inside the prompt itself: "The goal here is X. Better means Y." This helps redirect the system before it leans on its old assumptions about you.
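One way to make this a habit is a small template that refuses to build a prompt until both the goal and the definition of "better" are filled in. The sketch below is a minimal illustration in Python. `build_prompt` and its parameter names are hypothetical, not part of any AI tool's API.

```python
def build_prompt(goal: str, better_means: str, task: str) -> str:
    """Return a prompt that states the agreed target before the request.

    All names here are illustrative assumptions, not a real tool's API.
    """
    fields = {"goal": goal, "better_means": better_means, "task": task}
    for name, value in fields.items():
        # Refuse to build a prompt with an unstated target.
        if not value.strip():
            raise ValueError(f"{name} must not be empty")
    return (
        f"The goal here is {goal.strip()}. "
        f"Better means {better_means.strip()}.\n\n"
        f"{task.strip()}"
    )


prompt = build_prompt(
    goal="a one-page pitch for first-time investors",
    better_means="clearer, not more persuasive",
    task="Tighten the draft below without changing its claims.",
)
print(prompt)
```

Both collaborators can fill in the same `goal` and `better_means` values they agreed on in step 1, so the target travels with every prompt instead of living in each person's head.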

3. Reset saved memories and custom instructions

Go into Settings in your AI tool. ChatGPT: Personalization → Memory → Manage. Claude: Settings → Profile. Gemini: Activity → Gemini Apps Activity. Delete what doesn't serve the current goal. Most people skip this step. That's why their fresh chats keep producing drifted output.

4. Work around account-level personalization

You can turn off "improve the model" settings, but you cannot fully see what the platform has inferred about you over time. This layer cannot be cleared. It can only be worked around by being explicit in steps 1 through 3.

For technical teams and power users: shared system prompts.

If you and your collaborator both use tools that support persistent instructions - Cursor, ChatGPT Projects, Claude Projects, custom GPTs, or shared config files - you can move past verbal alignment into structural alignment. Write ONE system prompt together. Load it into both environments. The optimization target is now enforced in the tool itself, not negotiated each session.

This is what software teams already do with shared Cursor rules files (such as `.cursor/rules`) and team-wide prompt files. The same principle applies to writing partners, research teams, or anyone collaborating with AI in the loop. Shared system prompts turn parallel-prompting into parallel alignment.
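For teams that keep the shared prompt in a file, a quick check that both people loaded identical text can catch silent edits. The following is a minimal sketch, assuming the prompt is plain text that each collaborator pastes into their own tool. `prompt_fingerprint` and the sample prompts are hypothetical.

```python
import hashlib


def prompt_fingerprint(prompt_text: str) -> str:
    """Return a short SHA-256 fingerprint of a system prompt.

    Line endings and surrounding whitespace are normalized first so that
    copy-paste differences do not register as a mismatch.
    """
    normalized = "\n".join(
        line.rstrip() for line in prompt_text.strip().splitlines()
    )
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()[:12]


# Hypothetical sample prompts for illustration only.
shared = "The goal is a one-page pitch.\nBetter means clearer, not longer.\n"
mine = "The goal is a one-page pitch.  \r\nBetter means clearer, not longer."
edited = "The goal is a one-page pitch.\nBetter means more persuasive."

# Whitespace-only difference: fingerprints match.
print(prompt_fingerprint(shared) == prompt_fingerprint(mine))
# Wording changed: fingerprints differ.
print(prompt_fingerprint(shared) == prompt_fingerprint(edited))
```

If the fingerprints differ, someone has changed the optimization target, and it is worth confirming the goal with each other again before prompting further.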

Each system is not just improving the idea. It is optimizing for its own hidden objective: clarity for one, persuasion for another, speed or financial outcome for a third.

Those priorities come from prior prompts and assumptions about what "better" means.

So the idea doesn't stay the same. It shifts.

The systems don't just refine the idea. They redefine what success looks like.

If a system can shift direction without you noticing, it is influencing you in ways you cannot see.

That's the trap.

It feels like progress because both versions make sense. They sound structured. Logical. Clean.

But they're not solving the same problem anymore.

Research from Stanford's Human-Centered AI Institute shows that small changes in prompts can produce significantly different outputs from the same model.

A study published in Nature Human Behaviour found that algorithmic systems reinforce user perspectives, increasing divergence instead of reducing it.

Research from MIT Media Lab shows users tend to over-trust confident AI outputs even when accuracy varies.

A paper from OpenAI confirms that outputs are highly sensitive to user intent and phrasing.

So two capable people can:

- use AI correctly
- apply logic correctly
- and still end up in different places

Because the objective split early.

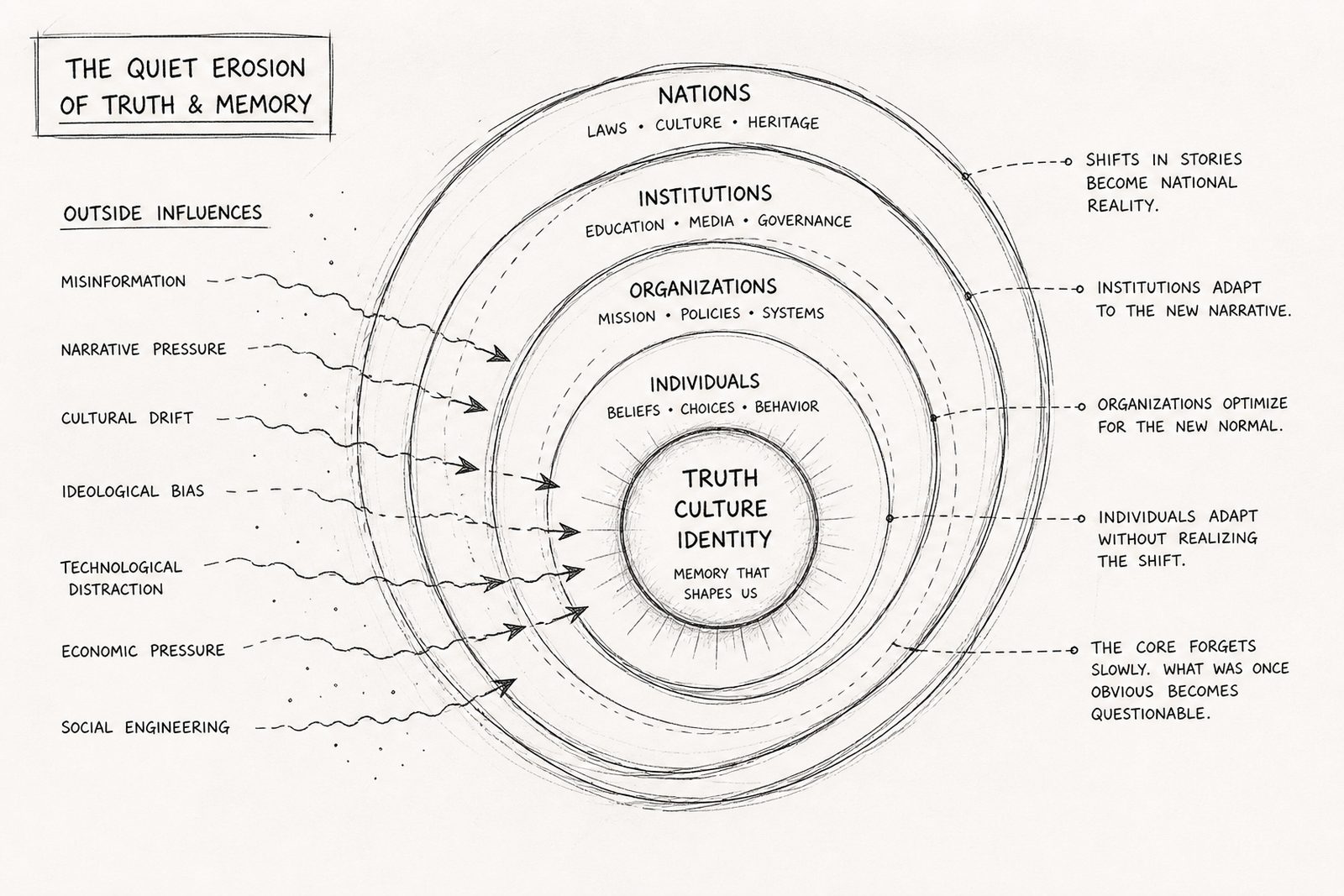

Where this becomes bigger than collaboration.

What you're seeing in real time doesn't stay contained to a conversation.

It shows up in what people remember. In what gets accepted as true. In how beliefs slowly shift. In how hard-earned knowledge gets rewritten over time.

Nothing changes all at once. It adjusts slightly. Then again. Then again.

Until what feels familiar is no longer what it started as.

Recognizing drift is a learned human skill.

Failing to recognize it is what corrupts what gets passed forward.

The checks that neutralize drift here are the same ones that matter there.

This is why some systems are now being designed to preserve high-integrity records of memory, values, and truth before they are reshaped over time. One example is Digital Legacy AI, which focuses on capturing first-hand human signals as a stable reference layer for families, organizations, institutions, and nations.

Because the goal is not better answers.

It's shared direction.

Where this research goes next.

This field note is part of ongoing behavioral research on Synthetic Drift and its downstream effects across collaboration, decision-making, and what gets passed to the next generation. Future essays will cover parallel-prompting in greater depth, the cognitive cost of optimizing for hidden objectives, and how human alignment becomes the only reliable defense against AI-mediated drift.